13.8.6. Macro “relauncherCodeFlowrateMPI.py”

13.8.6.1. Objective

The goal of this macro is to show how to run a code in parallel with another memory paradigm: whereas a TThreadedRun instance relies on shared memory (which can lead to thread-safety problems, as discussed in TThreadedRun), the MPI implementation is based on separated memory, and communication is done through messages. To do this, the usual sequential runner is removed and another runner is called to do the job. The flowrate code is provided with Uranie and has also been used and discussed throughout these macros.
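To make the idea of separated memory concrete, here is a minimal sketch that uses only the Python standard library. It is neither MPI nor Uranie: a child process stands in for a remote worker, and the names `CHILD_CODE` and `run_in_separate_memory` are hypothetical. The parent sends its data as a message, the child modifies its own private copy, and the only way the result comes back is through another message.

```python
import json
import subprocess
import sys

# Code executed by the child process: it reads one JSON message on stdin,
# modifies its own copy of the data, and sends the result back on stdout.
CHILD_CODE = """
import json, sys
values = json.loads(sys.stdin.readline())
values.append(sum(values))   # changes only the child's private copy
print(json.dumps(values))
"""


def run_in_separate_memory(values):
    """Send `values` to a child process and return the message it replies with."""
    completed = subprocess.run(
        [sys.executable, "-c", CHILD_CODE],
        input=json.dumps(values) + "\n",
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(completed.stdout)


parent_values = [1.0, 2.0, 3.0]
reply = run_in_separate_memory(parent_values)
print(parent_values)  # the parent's list is untouched by the child
print(reply)          # the child's result arrived as a message
```

With shared memory, the append would have been visible to every thread holding the list; here the parent only sees what is explicitly sent back.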

13.8.6.2. Macro

"""

Example of MPI usage for code launching

"""

from URANIE import DataServer, Relauncher, MpiRelauncher

import ROOT

# Create input attributes

rw = DataServer.TAttribute("rw")

r = DataServer.TAttribute("r")

tu = DataServer.TAttribute("tu")

tl = DataServer.TAttribute("tl")

hu = DataServer.TAttribute("hu")

hl = DataServer.TAttribute("hl")

lvar = DataServer.TAttribute("l")

kw = DataServer.TAttribute("kw")

# Create the output attributes

yhat = DataServer.TAttribute("yhat")

d = DataServer.TAttribute("d")

# Set the reference input file and the key for each input attribute

fin = Relauncher.TFlatScript("flowrate_input_with_values_rows.in")

fin.addInput(rw)

fin.addInput(r)

fin.addInput(tu)

fin.addInput(tl)

fin.addInput(hu)

fin.addInput(hl)

fin.addInput(lvar)

fin.addInput(kw)

# The output file of the code

fout = Relauncher.TFlatResult("_output_flowrate_withRow_.dat")

fout.addOutput(yhat)

fout.addOutput(d) # Passing the attributes to the output file

# Instantiate the code

mycode = Relauncher.TCodeEval("flowrate -s -r")

# mycode.setOldTmpDir()

mycode.addInputFile(fin)

mycode.addOutputFile(fout)

# Create the MPI runner

run = MpiRelauncher.TMpiRun(mycode)

run.startSlave()

if run.onMaster():

    # Define the DataServer

    tds = DataServer.TDataServer("tdsflowrate",

                                 "Design of Experiments for Flowrate")

    mycode.addAllInputs(tds)

    tds.fileDataRead("flowrateUniformDesign.dat", False, True)

    lanceur = Relauncher.TLauncher2(tds, run)

    # resolution

    lanceur.solverLoop()

    tds.exportData("_output_testFlowrateMPI_py_.dat")

    ROOT.SetOwnership(run, True)

    run.stopSlave()

Here the first difference when comparing this macro to the previous one (see Macro) is the runner creation:

# Create the MPI runner

run = MpiRelauncher.TMpiRun(mycode)

The TThreadedRun object becomes a TMpiRun object whose construction only requires a pointer to the assessor.
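The master/slave structure of the macro (the startSlave call, the onMaster block and the final stopSlave call) can be pictured with the following sketch. It is an illustration under stated assumptions, not the way TMpiRun is implemented: it uses standard-library subprocesses instead of MPI, the names `WORKER_CODE` and `evaluate_design` are hypothetical, and the toy function `x * x + y` stands in for the flowrate code.

```python
import json
import subprocess
import sys

# Worker loop: wait for messages, evaluate, reply, and leave on "stop".
WORKER_CODE = """
import json, sys
for line in sys.stdin:
    message = json.loads(line)
    if message == "stop":
        break
    x, y = message
    print(json.dumps(x * x + y), flush=True)
"""


def evaluate_design(points, n_workers=2):
    """Evaluate every point on worker processes and return results in order."""
    workers = [
        subprocess.Popen([sys.executable, "-c", WORKER_CODE],
                         stdin=subprocess.PIPE, stdout=subprocess.PIPE,
                         text=True)
        for _ in range(n_workers)
    ]
    results = []
    try:
        for start in range(0, len(points), n_workers):
            batch = points[start:start + n_workers]
            # The master sends one point to each worker ...
            for worker, point in zip(workers, batch):
                worker.stdin.write(json.dumps(point) + "\n")
                worker.stdin.flush()
            # ... then gathers the replies in the same order.
            for worker, _ in zip(workers, batch):
                results.append(json.loads(worker.stdout.readline()))
    finally:
        # Release every worker with an explicit message (assumed here to be
        # the role that run.stopSlave() plays in the macro).
        for worker in workers:
            worker.stdin.write(json.dumps("stop") + "\n")
            worker.stdin.flush()
            worker.stdin.close()
            worker.wait()
            worker.stdout.close()
    return results


print(evaluate_design([[1, 2], [3, 4], [5, 6]]))
```

Only the master holds the full list of points and the collected results, which mirrors the fact that the TDataServer and the TLauncher2 are created inside the onMaster block of the macro.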

Another line is different and is specific to the language: because of the way ROOT and Python deal with object destruction (through the garbage collector approach for the latter), there is a problem in the way MPI_Finalize, one of the key methods of the MPI treatment, is called. To prevent this from happening in Python, the following line should be added as soon as the runner object is created:

ROOT.SetOwnership(run, True)

This allows ROOT to destroy the object, which calls the finalize method so that every slave is properly released. Apart from that, the code is very similar; the only other difference is the way the macro is called. It should not be run with the usual command:

python relauncherCodeFlowrateMPI.py

Instead, the command line should start with the mpirun command as such:

mpirun -np N python relauncherCodeFlowrateMPI.py

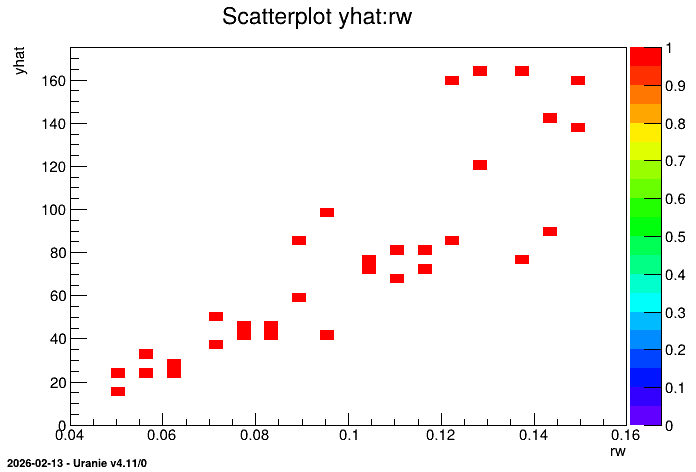

where N should be replaced by the number of requested processes. Once run, this macro also produces the following plot.

Never use the -i argument with the python command line, as the macro would then never end.
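When the macro is launched from another script, the two rules above (start with mpirun, never pass -i to python) can be enforced before anything is run. The helper below is a hypothetical convenience, not part of Uranie; it only builds the argument list and does not call mpirun itself.

```python
import shlex


def build_mpi_command(n_processes, macro="relauncherCodeFlowrateMPI.py",
                      python_args=()):
    """Return the argument list for launching `macro` under mpirun."""
    if n_processes < 1:
        raise ValueError("at least one process is required")
    if "-i" in python_args:
        # Interactive mode would keep the interpreter open, so the macro
        # would never end.
        raise ValueError("do not pass -i to python when using mpirun")
    return ["mpirun", "-np", str(n_processes), "python", *python_args, macro]


command = build_mpi_command(4)
print(shlex.join(command))
# prints: mpirun -np 4 python relauncherCodeFlowrateMPI.py
```

The resulting list can be handed to `subprocess.run(command, check=True)` on a machine where mpirun and Uranie are installed.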

13.8.6.3. Graph

Figure 13.50 Representation of the output as a function of the first input with a colZ option